1. Introduction#

1.1 Recall is not interpretation. Interpretation can be measured.#

AI is moving from a tool a person uses to an agent that acts on a person's behalf, and that shift changes what "memory" must do for a specific individual. State of the art AI memory has been optimizing for recall as the success metric. The four leading systems (Zep, Letta, Mem0, and Supermemory) compete on standard recall benchmarks such as LOCOMO and LongMemEval, reporting accuracies in roughly the 70% to 93% range depending on provider, model, and benchmark variant (§2.2). Optimizing further on recall leaves something more fundamental unmeasured. This research paper explores how recall is one part of memory, and how the function of memory is dictated by how an individual processes the facts and experiences of their life.

We use interpretation to refer to this human-side property: the way a specific person processes facts and experiences into judgments, decisions, and reactions. Viewing situations from different lenses can lead to entirely different interpretations of the same set of facts. This has been shown across the human experience, from the sciences to religion, and by extension to the relative experiences of any individual; memory is deeply personal. For an AI to serve a specific person, it must be given context on the framework that person uses to reason, not just the raw facts or information itself. Throughout this paper we use the term Behavioral Specification to refer to a static document that extracts and encodes a person's behavioral patterns; the operational definition is developed across §3.7. The Behavioral Specification is the artifact that captures this interpretive framework, and is provided to an AI as context.

We introduce representational accuracy as the corresponding AI-side property: how well a system's internal model of a specific person captures their interpretive patterns. It is not recall, preference matching, or persona consistency. It is a distinct property of the AI system, and state of the art memory benchmarks do not isolate it. Prior work closest to this axis (Twin-2K for scaled behavioral prediction, PersonaGym for persona fidelity, AlpsBench for preference alignment) measures related properties but not the transfer of a person's interpretive patterns to new situations the system has never seen. §2.1 positions each benchmark against what this paper measures, and Appendix F develops the scope differences in detail.

The core hypothesis of this research is that representational accuracy of a person's interpretation improves an AI system's behavioral alignment with that person. This is the operational primitive for any AI system meant to act on a person's behalf: the system's behavior can only match the user's reasoning to the extent the system represents that reasoning accurately. The operational test in this paper is behavioral prediction on held-out situations: given a situation drawn from text the model has never seen, the model generates how the subject would respond; the response is scored by a panel of calibrated large language model (LLM) judges against the subject's own verbatim response in the held-out text on a 1-5 interpretive rubric (§3.3). Accurate prediction on held-out text is evidence that the representation captures the subject's recurring patterns of reasoning, distinct from the facts and stylistic surface that current extraction pipelines already produce. The design also reduces the risk of sycophancy1: the answer is checked against the person's narrative, which the model has never seen, not against anything the user says during the conversation. The held-out test is one operationalization of the hypothesis.

We test this hypothesis on the leading state-of-the-art AI memory systems and on a diverse set of 14 autobiographies from authors across the world. For this initial examination we use baselined and calibrated LLM judges to evaluate the performance of each memory system, on its own and in combination with a Behavioral Specification: a static document that extracts and encodes a stable representation of a corpus's behavioral patterns. The specification captures the recurring patterns in how the subject reasons, drawn from the shape of judgments and reactions across the corpus (for example: "spiritual integrity over social cost...", "reform through love...", "hierarchical deference..."). A walked example of the audit chain from such a pattern back to its grounding facts and source passages appears in §2.3.

Defined terms used throughout the paper are collected in Appendix H for reference.

1.2 What we tested#

We tested the Behavioral Specification across 14 historical subjects, each with a public domain autobiography. For every subject we split the source corpus in half: the training half was used to generate the specification, to seed each memory system, and to provide the retrievable fact pool. The held-out half was used only to produce behavioral prediction questions and was never shown to the response model, the language model being asked to predict how the subject would respond. The Behavioral Specification is the context document that the response model receives. The set of held-out questions for each subject is the question battery (size and composition per subject in §3.5). The test was whether each system, under each tested condition, could predict how that specific person would respond in situations drawn from text it had never seen.

The Behavioral Specification itself is built from the training-half corpus through an extraction-and-authoring pipeline (§3.7). The pipeline distills the recurring patterns of how the subject reasons into a single structured document, typically around 7,000 tokens (~5,000 words) long. That document is what the response model receives as context when asked to predict how the subject would respond.

Hypotheses. The study tests five claims about how a representation of a person shapes AI behavior on that person's behalf:

- H1. A response model given a Behavioral Specification produces responses that align with the person's documented behavior more closely than the same model given no context, facts retrieved by a memory system, the full extracted fact list, or the raw source corpus (§4.1).

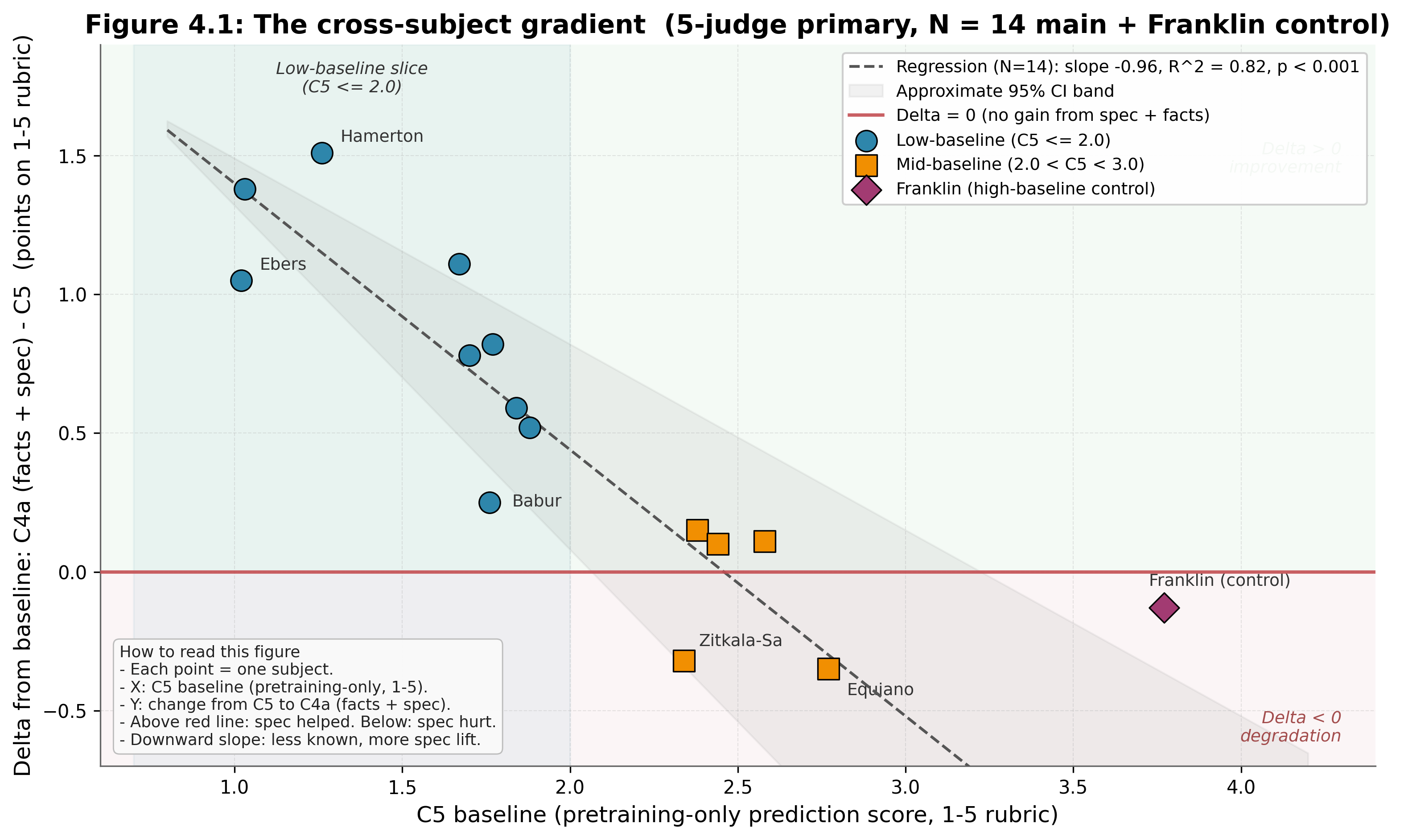

- H2. The specification's benefit is inversely proportional to the response model's pretraining coverage of the person. Its effect is largest on people the model does not already know (§4.1).

- H3. The benefit comes from the content of the correct specification for the correct person, not from the mere presence of a structured prompt. A random other person's specification, applied in its place, produces a substantially smaller and content-specific effect than the matched specification (§4.3).

- H4. The specification interacts with memory-system retrieval in a structured way that depends on the type of question being asked. Aggregate effects on each memory system reflect the balance of these per-question patterns and shift with retrieval architecture (§4.4).

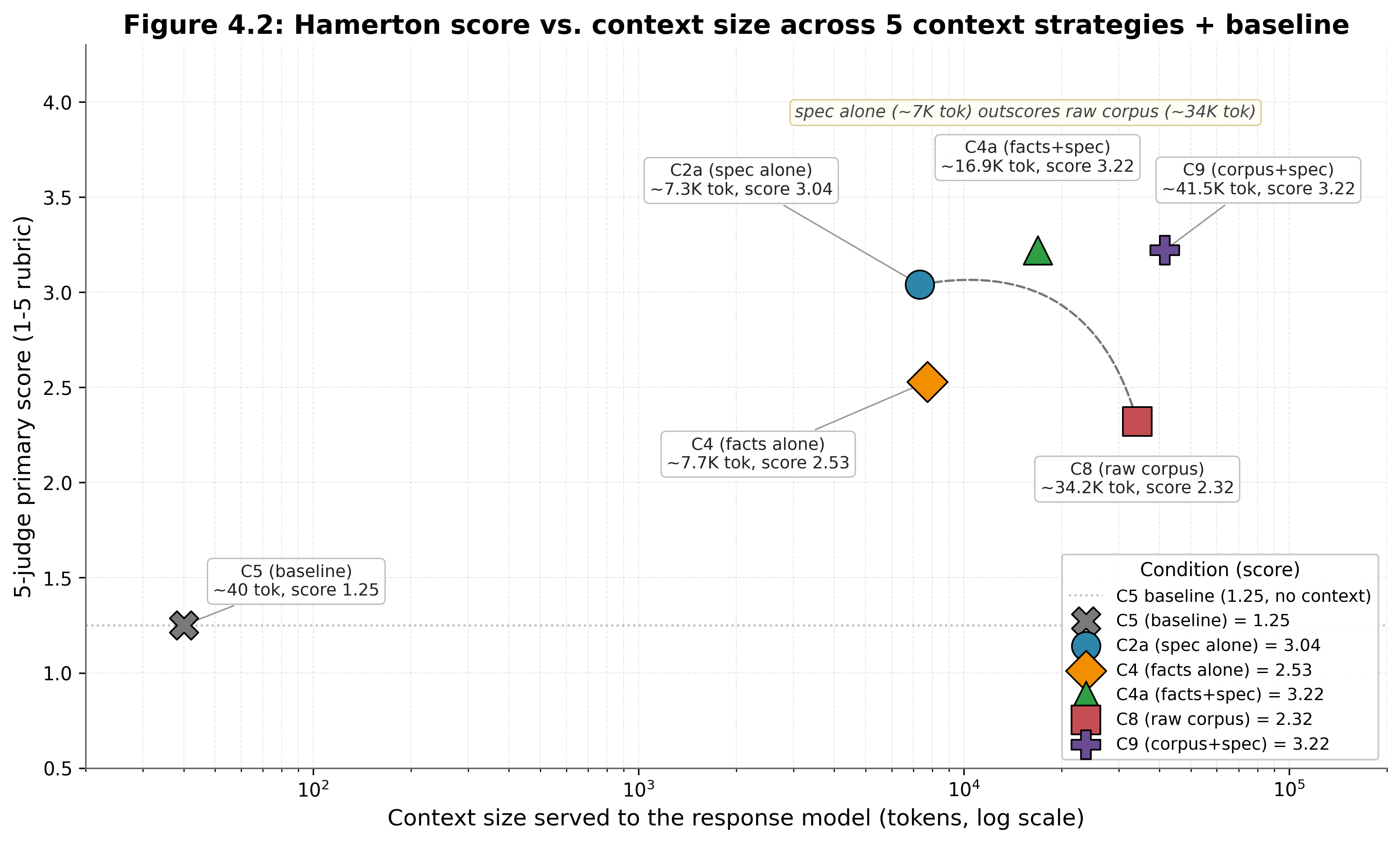

- H5. The Behavioral Specification's quality advantage is also a compression advantage: a ~7,000-token (~5,000-word) specification recovers most of the predictive accuracy of an 80-400K-token (~60-300K-word) raw corpus (§4.2).

Post-hoc analyses surfaced during the work are reported alongside these results.2

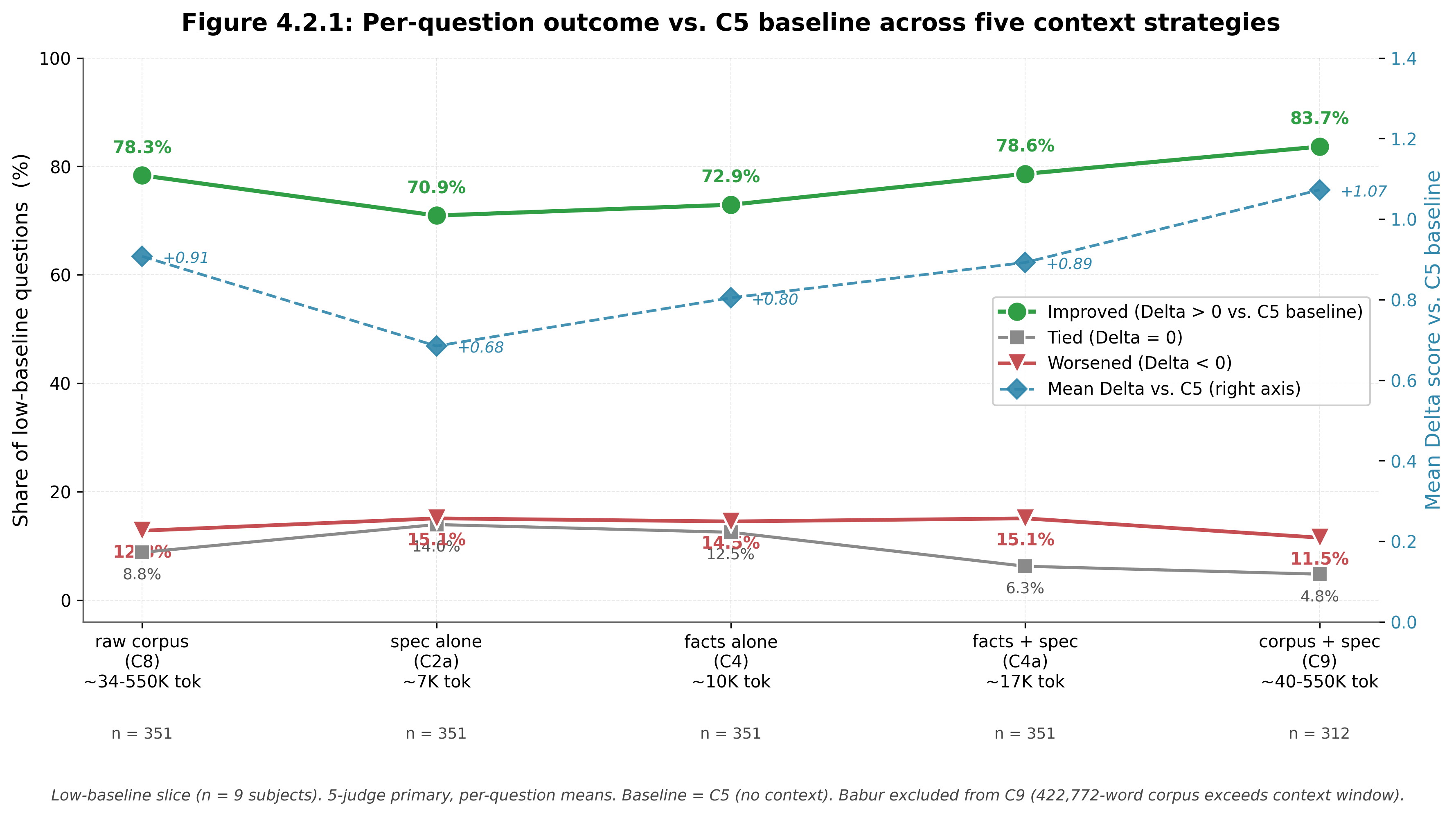

Primary and secondary outcomes. The primary outcome is the mean prediction score on the 1-5 rubric across a 5-judge primary panel (§3.3).3 Cross-subject claims are calculated subject-by-subject before averaging, so they are not driven by subjects with larger question batteries. As a secondary outcome, we report the per-question improvement rate: how often a context condition helps relative to the comparison baseline (§4.2.1), not just by how much it helps when averaged. The per-question secondary outcome is informative because the Spec's effect is conditional on question type: aggregate effects reflect the balance of interpretation-heavy items (where the Spec lifts most) and literal-recall items (where retrieval already suffices). The formal proposal and failure-mode analysis for the secondary outcome are in §4.2.1; full operational details for both outcomes are in §3.3.

Each memory system is tested in both a controlled configuration (identical pre-extracted fact pool) and a native configuration (the provider's own ingestion pipeline); design detail in §3.2. Running in parallel across both is the Behavioral Specification, tested alone and layered on top of each configuration. Every meaningful combination of inputs is evaluated as its own condition:

| Group | Condition | Inputs given to the model | Purpose |

|---|---|---|---|

| Direct context manipulations | No context (C5) | Nothing. The model answers from pretraining alone. | Pretraining baseline. Measures what the model already knows about the subject from public sources. |

| Specification alone (C2a) | The Behavioral Specification, with no retrieval, no facts, and no corpus. | Tests whether structure without retrieval is sufficient on its own. | |

| Wrong-specification control (C2c) | A different subject's specification applied to this subject. Two variants: an adversarial fixed pairing (v1) and a random derangement (v2).4 | Tests whether the effect is driven by the content of the correct specification, or by the mere presence of structured prompting. | |

| All facts, no specification (C4) | Every extracted fact for the subject, loaded into context at once. | Tests whether information sufficiency alone drives prediction, independent of structure. | |

| Facts + specification (C4a) | Every extracted fact plus the specification. | Combines full information and structure to test the upper bound of context-provided prediction. | |

| Raw corpus, no specification (C8) | The full training-half corpus loaded into context. | Tests whether unstructured source text can substitute for an interpretive representation. | |

| Corpus + specification (C9) | Raw training corpus plus the specification. | Tests whether structure is additive to unstructured source text. | |

| Memory-system configurations (controlled, all 5 systems) | Retrieval alone, controlled (C1) | Top-k facts retrieved by each memory system (Mem0, Letta5, Supermemory, Zep, Base Layer) from the shared fact pool. | Tests retrieval sufficiency, and whether providers converge on which facts are most relevant given identical input. |

| Retrieval + specification, controlled (C3) | Memory system retrieval from the shared fact pool, plus the specification. | Tests whether the specification layers cleanly on retrieval when the input is held constant. | |

| Memory-system configurations (native, 4 commercial systems) | Retrieval alone, native (C1 native) | Top-k results from each memory system's own ingestion pipeline operating over the raw training corpus. | Real-world comparison of each memory system's full ingestion-plus-retrieval stack. |

| Retrieval + specification, native (C3 native) | Memory system's own ingestion and retrieval, plus the specification. | Tests whether the specification improves the real-world deployment of each memory system. |

The 14 subjects span four continents and roughly two millennia of written human experience. Ordered chronologically: Saint Augustine (North Africa, 4th-5th c.), Bābur (Central Asia and India, 15th-16th c.), Bernal Díaz del Castillo (Spain and Mexico, 15th-16th c.), Benvenuto Cellini (Italy, 16th c.), Jean-Jacques Rousseau (France, 18th c.), Olaudah Equiano (West Africa and Britain, 18th c.), Mary Seacole (Jamaica and Britain, 19th c.), Elizabeth Keckley (United States, 19th c.), Yung Wing (China and the United States, 19th c.), Philip Gilbert Hamerton (Britain, 19th c.), Fukuzawa Yukichi (Japan, 19th c.), Georg Ebers (Germany, 19th c.), Sunity Devee (India, late 19th c.), and Zitkala-Ša (Yankton Dakota, early 20th c.). Source corpora range from 25,231 words (Hamerton) to 422,772 words (Bābur). Full source references are in §3.3.

Predictions were scored on a 1-5 rubric where the integer anchors mark categorical shifts in answer quality (full rubric and verbatim judge prompt in §3.3, summarized in the table below). Crossing an integer anchor represents a real change in the kind of answer the model produced, not a small numerical adjustment. For example, a move from 1.8 to 2.4 crosses the 2.0 boundary: the model goes from refusing the question or producing an off-base answer (anchor 1) to engaging with the question even when the prediction is still wrong (anchor 2). Absolute point gains, not percentages, are the informative metric for cross-subject comparison.

| Score | Anchor (verbatim) | Shift from previous anchor |

|---|---|---|

| 1 | Refuses or off-base | (rubric floor) |

| 2 | Wrong prediction | From cannot engage to engaging with the question |

| 3 | Right domain wrong outcome | From wrong prediction to in the neighborhood |

| 4 | General direction correct | From in the neighborhood to right direction |

| 5 | Predicts specific outcome | From right direction to matching the held-out text |

Score interpretation, including the cross-anchor rule for fractional scores (e.g., 2.5, 3.4), is in §3.3.1. Example questions per subject and panel composition are in §3.3.2.

The baseline we refer to throughout is the no-context condition (C5): the response model's score with no external information. Low-baseline subjects are the population of relevance: people the model has insignificant pretraining understanding of, even when fragments of their digital footprint exist in training data. High-baseline subjects are the opposite, people the model already knows about from pretraining. Almost everyone in the active human population falls into the low-baseline band; even people with substantial public output captured in training corpora have only fragments of their reasoning represented. The low-baseline band is the rule, not the exception.6 Results are reported separately on the low-baseline slice (n=9) alongside the full 14-subject analysis.

The study is structured into two tiers. Tier 1 (main study) uses Claude Haiku 4.5 as the response model across all 14 subjects on every condition. Tier 2 is a smaller cross-provider directional probe (§3.6, §4.6.1). The 7-judge panel spans three providers; the 5 non-Gemini judges form the primary aggregate and the 2 Gemini judges are reported as a sensitivity check (§3.3.3).

Together these hypotheses test whether a Behavioral Specification can move a language model toward acting in alignment with a specific person.

1.3 What we found#

The Behavioral Specification (referred to as the Spec in the discussion that follows) acts as an interpretive layer. The Spec's benefit is largest where the model knows the person least, and the mechanism is per-question. On questions where the model needs an interpretive frame and lacks one, the Spec categorically improves the answer produced. On questions where the model already has the answer, the Spec adds nothing and sometimes hurts.7 What follows are seven findings, beginning with the cross-subject gradient (primary outcome) and the per-question mechanism beneath it. The thread is alignment: how accurately a model predicts a specific person's reasoning is the operational measure of how closely it can act in alignment with that person.

Headline findings.

- Gradient. Every low-baseline subject improved with the Spec; mean lift +0.89 points on the 1-5 rubric, 78.6% of individual questions improve.89 Across subjects, the Spec's benefit is largest where the model knows the person least. The gradient comes from the per-question mechanism described next: low-baseline subjects have more questions where the model lacks an interpretive frame, and therefore more questions where the Spec lifts. Detail in §4.1.

- Per-question interpretive lift. The Spec moves 55% of low-baseline questions across at least one rubric anchor upward; 18% cross two or more. On individual questions where the model needs an interpretive frame and lacks one, the Spec categorically improves the answer produced. Crossing one rubric anchor moves a response from "wrong prediction" to "right direction with specifics." Crossing two or more anchors is a bigger jump: a single question where the model moves from refusal or generic guessing to a recognizable, person-specific response. 5.9% cross three or more anchors. The pattern holds across Spec-only, facts+Spec, and corpus+Spec conditions on the low-baseline subjects. Detail in §4.1, §4.2.

- Compression. The Spec recovers 76% of what the corpus delivers at 23× less context. A 7,000-token structured representation matches most of the predictive accuracy of a 163,000-token raw corpus. The Spec selects and structures the behavioral signal; the interpretive layer drives the result, not the volume of context. Spec-alone +0.71 vs. corpus-alone +0.93 over baseline. On Hamerton (smallest corpus tested), the Spec scores higher than the raw corpus (2.63 vs. 2.27). Detail in §4.2.

- Content specificity. The wrong Spec drops accuracy below the no-context baseline (Δ = −0.25); the correct Spec lifts accuracy above (Δ = +0.35). What produces the lift is the content of the correct Spec for the correct person, not the presence of a structured prompt. Random pairings (a wrong Spec assigned by chance to a different subject) sometimes still produce predictions that align with the held-out text, suggesting some behavioral patterns transfer across subjects, but the correct Spec consistently outperforms. Random-derangement Δ = +0.15. Detail in §4.3.

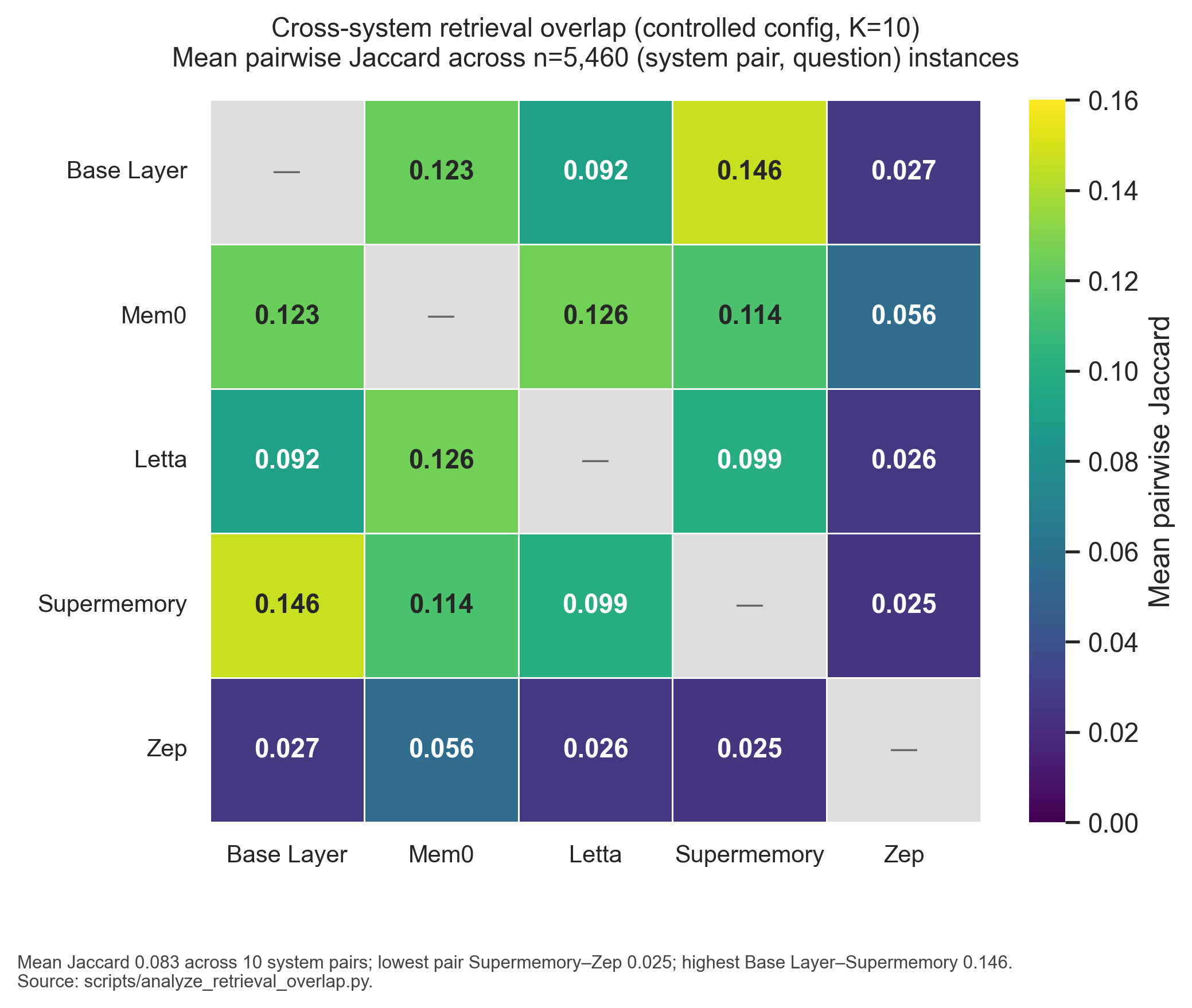

- Memory-system layering. The Spec lifts 3 of 4 commercial memory systems on aggregate; per-question anchor crossings range 20–36% across systems. Layered on top of commercial memory systems, the Spec helps dramatically on interpretation-heavy questions and reduces refusal rates on questions retrieved facts could not ground. It also exposes per-question structure the aggregate hides: some questions improve, some regress, and the balance shifts with retrieval architecture. Mem0, Letta, and Zep show positive aggregate lift; Supermemory does not. Detail in §4.4.

- Hedging reduction. The Spec collapses baseline hedging from 41.2% of responses to 0.4%.10 The reduction is content-specific, not prompt-driven: under the wrong-Spec adversarial control, the model continues to hedge or explicitly flag the mismatch on 60.6% of responses (§4.3). Where the Spec content matches the subject, the model commits; where it does not, the model abstains. On low-baseline subjects, where the model would otherwise refuse to engage, the matched Spec converts refusal into substantive response. This is the gradient operating at its floor. Detail in §4.1.1 (abstention versus non-abstention misalignment decomposition) and §4.3 (wrong-Spec content-specificity).

- Retrieval divergence. Given identical input, memory-system providers share zero top-10 facts on 35.9% of question-pairs; mean pairwise overlap is 8.3%. On standard recall benchmarks like LongMemEval and LOCOMO, the four commercial memory systems we tested perform within a few percentage points of each other. Yet on which facts to surface as most relevant, they substantially diverge. Convergence on top-K under identical input would have been evidence of a shared interpretive framework; the systems do not converge. On 65.6% of (system pair, question) instances they share one or fewer facts. Detail in §4.4.1.

Mechanism: three patterns of interaction with retrieval (full development in §4.4.3). Baseline runs suggest the model already attempts shallow inference from a user's raw data on its own; the specification makes that inference inspectable and structured.

- Pattern 1, Interpretation-heavy questions. The specification supplies a generalized pattern from the source that has to transfer to a new situation; retrieved facts alone are not enough (Fukuzawa Q26).

- Pattern 2, Literal-recall questions. Retrieval already returns the plain answer; the specification's interpretive framing drifts past the question and negatively impacts the response (Yung Wing Q5).

- Pattern 3, Refusal-triggering questions. When the Spec supports refusing without enough information (not all specs do), the model produces principled refusals aligned with the Spec; the content-match rubric still scores them as off-base (Zitkala-Ša Q18).

Robustness across providers. We varied both the question-battery generation model and the response model across providers; the Spec direction reproduces. Detail in §4.6.1.

Exploratory note: Letta stateful-agent path. Letta's stateful-agent architecture self-edits a persistent memory block during ingestion. On 3 subjects (post-hoc), it scored above Base Layer's unified-brief specification at matched response model. At the largest corpus tested, the block grew to ~335K characters with 25% verbatim sentence duplication and 35-56% semantic near-paraphrase duplication, indicating an architectural ceiling at scale that does not apply to the unified-brief specification. Case study in §4.5 / Appendix G.

1.4 What this implies#

AI is becoming a broadly used technology, comparable to email or mobile phones in how widely it touches daily decisions. The population of relevance (§1.2) is anyone who uses or will use an AI system. Even the autobiographers in this study, people whose work is in pretraining and who should technically be known to the model, score near the rubric floor in the no-context condition. The 14 main-study subjects are also a single-genre sample (autobiography is reflective, retrospective, audience-aware); cross-genre generalization is flagged as future work (§6.1, §7.2). On a frontier model serving the general population, the typical user sits even deeper into the low-baseline band than our subjects.

The gap the Behavioral Specification fills cannot be closed by training a larger model on more public data. The private record does not exist in a form a training corpus can capture; even where fragments exist, they are scattered across formats and channels and cannot be reliably reassembled into how a specific person reasons. The structural options for what fills this gap are narrow:

- Each person supplies their own representation to whatever AI system serves them. The Behavioral Specification is one implementation of this option, not the only one.

- Personalization remains surface-level (style, voice, preference, demographic inference, observable behavior), addressing the layer current memory systems already cover but missing the interpretive framework that lets an agent act on a specific person's behalf.

- AI systems infer a representation of the user from observed interactions, building it opaquely, without explicit input from the user or the ability for the user to inspect or correct it.

What this paper claims is that personalization infrastructure of the first shape (user-held, portable, inspectable, traceable, representation-grade) is what the next generation of human-AI interaction will require, especially as agents begin acting on people's behalf. §5 is an extended discussion of these implications; §7 develops the safety, alignment, and deployment implications.

2. Prior Work, Industry Benchmarks, The Fifth Target#

AI memory and personalization research today is organized around four measurement targets: recall of stored facts, survey-response prediction, persona fidelity, and preference alignment. Each is supported by its own benchmark family and its own line of system design. None of them measures whether an AI system has an accurate internal model of how a specific person reasons. This paper proposes a fifth target, representational accuracy, and uses behavioral prediction on held-out reasoning situations as its operational test. The remainder of §2 walks the four existing targets, names the benchmarks attached to each, and positions the fifth alongside them.

Memory systems today optimize for recall. Recall-optimized efforts include both neural-memory-analogue systems (architectures that borrow from human memory engineering: episodic consolidation, working-memory slots, retrieval over embeddings) and the broader class of vector-retrieval and embeddings-based commercial memory providers (Mem0, Zep, Supermemory, Letta). These systems do store and retrieve information for a specific user, but the property they are designed and benchmarked for is recall accuracy on standard benchmarks, not how accurately the system represents that user's reasoning. The optimization target is general by construction; any individual user's interpretation is not what these systems are measured against. A separate body of research, cognitive-representation research, studies human reasoning itself: how people form representations of others, how schemas compress experience. The gap between these directions is the translation: applying what we know about human reasoning to the direct interaction between an AI system and a specific individual, and shaping the system's internal model of that individual in a way that serves them rather than serving an average.

Language models are trained to produce responses that are helpful on average across a large population of users. That optimization target produces outputs that no single user is the reference point for. Personalization requires the opposite property: a system whose outputs are tuned to a specific individual rather than to a population aggregate. That kind of intentional individual-specificity, not "bias" in the negative sense but an explicit design target, is the missing thread in current AI memory and human-AI interaction research.

Personalization in this paper's sense. "Personalization" in current AI research typically means responsiveness to stated preferences (dietary restrictions, communication style) or stored facts about the user (location, occupation, history). Both are useful and both live at the surface of the user. We use "personalization" in a stronger sense throughout this paper. We mean representing the interpretive layer that sits beneath stated preferences and biographical facts: how a specific person organizes experience, what they treat as evidence, what reasoning patterns they apply across new situations. Preferences and facts are downstream artifacts of that interpretive layer; the layer itself is what produces them. The behavioral prediction battery and Behavioral Specification described in §3 instantiate personalization in this deeper sense, and §5 returns to what this layer is and is not.

2.1 Memory and personalization benchmarks#

This subsection walks each of the four existing targets in turn, naming the benchmarks attached to each and their scope. Representational accuracy is positioned as the fifth target at the end of the walk. An extended benchmark-by-benchmark analysis is in Appendix F.

Recall measures retrievability of facts, not reasoning about them. LOCOMO (Maharana et al., ACL 2024, arXiv:2402.17753) measures conversational-memory quality: after a multi-session conversation, the system is asked questions like "what did the user say about their job on day 3?" and scored on fact retrieval. LongMemEval (Wu et al., ICLR 2025, arXiv:2410.10813) measures long-term memory across multiple sessions on five capability dimensions (single-session, multi-session reasoning, temporal reasoning, knowledge updates, abstention) and is heavily recall-weighted. A system can saturate recall on such benchmarks and still fail behavioral prediction, because retrieval answers the question "can the fact be found" rather than "does the system know how the person reasons about the fact." Recall is a necessary property for most downstream uses of memory but it is not sufficient for representational accuracy.

Survey-response prediction infers how a person would answer one questionnaire item from how they answered others. Twin-2K (Toubia et al., 2025, arXiv:2505.17479) does this for 2,058 participants on a 17-task heuristics-and-biases battery; items share a common format (multiple choice, Likert scale, numeric), scored by distance-based accuracy. Twin-2K's stated target is prediction accuracy on survey interpolation: the model is scored on how well it predicts a held-out questionnaire response, not on whether it represents the underlying reasoning that produced the response. Our target is representational accuracy on a cross-format task: autobiographical prose input, open-ended behavioral prediction output, rubric-based scoring against a verbatim held-out passage. The structured-questionnaire format and the open-ended behavioral reasoning this paper studies measure different properties. A system could perform well on Twin-2K and not on our battery (survey interpolation does not require modeling reasoning transfer to new contexts), and a system could perform well on our battery and not on Twin-2K (accurate reasoning representation does not guarantee survey-format numerical accuracy). The two benchmarks diagnose different properties of the same general capability.

Persona fidelity measures whether a model stays in character across the back-and-forth of a conversation.11 PersonaGym (Samuel et al., Findings of EMNLP 2025, arXiv:2407.18416) scores consistency with a described persona during conversation: given a one-line persona ("You are a 45-year-old skeptical accountant from Toronto"), the model is scored on whether its multi-turn replies stay in-character, graded against a held-out criterion set. In practice the model is checked for consistency with the persona's surface attributes (skeptical, accountant-flavored responses; not breaking character into a different age or profession), not for whether it reproduces how a specific person would reason. PersonaGym's one-line descriptor is a substantially shallower input than this paper's ~7,000-token Behavioral Specification or Twin-2K's full-text survey persona;12 consistency with it does not require modeling that person's reasoning on new situations. PersonaGym measures a useful property (holding voice over a conversation); fidelity to a one-line persona is a weaker condition than representational accuracy.

Preference alignment measures whether responses match user preferences. AlpsBench (Xiao et al., 2026, arXiv:2603.26680) evaluates whether explicit memory mechanisms improve preference-aligned and emotionally resonant responses: after ingesting a user profile, the model is asked conversational questions (preferences, emotional support) and responses are scored on preference alignment and emotional resonance rubrics, not on predictive accuracy. Their central finding, that recall improvement does not automatically carry into preference alignment, is arrived at independently and is complementary to this paper. Both papers point at the same gap from different sides: solving for recall is insufficient for what memory is ultimately for. Preference alignment is an outcome property (whether a response matches what the user prefers). Representational accuracy is an upstream property (whether the AI's internal model of the user is correct). Preference alignment is one downstream consequence of representational accuracy being correct; it is not the same property.

We propose behavioral prediction on held-out reasoning situations as a test of a fifth target: representational accuracy.

Prediction is the test, not the goal. We do not pursue prediction accuracy as an end in itself. The target is representational accuracy, the fidelity of an AI's internal model of a specific person, and behavioral prediction on unseen situations is the instrument we use to measure it. A prediction score tells us the representation captured something that generalizes to new situations; a low score tells us it did not. Prediction is a diagnostic; the Behavioral Specification is what this paper is testing.

The held-out design rests on a stability premise. A person's interpretive patterns must be stable enough within their own corpus that what is captured from one half references what appears in the other. Without that, held-out behavioral prediction is impossible in principle, regardless of how good the representation is. The 14 main-study subjects have coherent autobiographical narratives consistent with the premise; §4.1 reports that the Behavioral Specification authored from training text generalizes to held-out text at above-baseline rates. The constraint matters: subjects whose reasoning shifts substantially across their corpus (across a major career change, a profound life event, or a decades-long corpus with distinct epochs) may not be well-represented by a single snapshot specification, which is one reason temporality is a flagged follow-up in §7. We state the premise explicitly so that what the held-out test can and cannot diagnose is clear.

The missing axis is representational accuracy itself. Each existing benchmark family measures a real property of memory systems, and each is useful for its own target. What is missing is an axis that measures how accurately the memory system represents the person whose behavior it is meant to anticipate. This paper's approach is a prototype answer on that axis, not a finished benchmark. §7 flags a differentiated rubric (one that separates interpretation-heavy from literal-recall questions, and scores epistemic honesty as its own dimension) as the priority follow-up for turning this prototype into a standardized benchmark.

A single number does not capture a memory system's full capability. Recall, survey-response prediction, persona fidelity, preference alignment, and representational accuracy are distinct axes. A system that saturates one may do nothing on another. Production-grade evaluation of memory systems should report results on multiple axes rather than on any single one.

2.2 Memory systems for LLM agents#

The four commercial memory systems we evaluate (Mem0, Letta, Supermemory, Zep) have converged on a shared set of capabilities: semantic retrieval over embedded content, source attribution, multi-level memory structures, and benchmark-validated recall performance. They differ in how each of these is architected. None positions representational accuracy or behavioral prediction of a specific individual as a design target.

Table 2.1. Memory system comparison. Verified against primary sources.

| Provider | Core architecture | Retrieval method | Memory types | Published recall score |

|---|---|---|---|---|

| Mem0 | Extract → consolidate → retrieve pipeline; Mem0g graph variant adds a directed labeled knowledge graph alongside the vector store | Hybrid: semantic + keyword + entity | Conversation, session, user, organizational | 91.6 LOCOMO, 93.4 LongMemEval (current algorithm)13 |

| Letta / MemGPT | LLM-as-operating-system; virtual context management with main context plus external context | Archival via archival_memory_search; main-context memory blocks self-edited via core_memory_append, core_memory_replace |

persona and human blocks in main context; archival and recall memory external |

74.0% on LOCOMO with GPT-4o-mini14 |

| Supermemory | Five-component architecture: chunk-based ingestion, relational versioning, temporal grounding, hybrid search, session-based ingestion | Hybrid with reranking and query rewriting; source chunks injected at retrieval | Contextual memories, relational versions, session data | 81.6% / 84.6% / 85.2% on LongMemEval_s with GPT-4o / GPT-5 / Gemini-3-Pro (self-reported) |

| Zep | Built on Graphiti (Apache 2.0, open source). Bi-temporal knowledge graph | Hybrid: semantic + BM25 + graph traversal | Episodes (ground-truth source), Entities, Facts-as-triplets with temporal validity windows | 71.2% on LongMemEval with GPT-4o15 |

All four systems report recall scores in the 70-93% range; on the standard recall benchmarks, recall is approaching solved.16 All four are sophisticated systems that solve real problems in memory management. They optimize for storing, organizing, and retrieving what a person said or did. None of them takes representational accuracy, the property of interest to this paper, as an explicit design target.

Of the four systems, Letta (Packer et al., 2023, arXiv:2310.08560) is architecturally distinct: it is the only one whose core architecture treats memory as something an agent synthesizes during conversation rather than stores for later retrieval.17 This stateful-agent design is examined separately as a post-hoc case study in §4.5 (full case study in Appendix G), distinct from the archival-retrieval path Letta exposes for the main-study conditions. The Behavioral Specification targets the interpretive layer that sits above retrieval, which three of the four (Mem0, Supermemory, Zep) do not model at all, and which the fourth (Letta) models implicitly through agent-initiated memory editing that our main-study configuration did not exercise (see §4.3 and §4.5).

2.3 Traceability and reasoning traces#

Traceability operates at two levels. Fact-level traceability answers where a retrieved claim came from. Reasoning-level traceability answers why the system believes this about this person. The four memory systems we evaluate provide the first; representing how a person reasons requires the second. This difference is load-bearing for the paper: representational accuracy operationalizes interpretation, and interpretation cannot be verified at the fact level alone. A system that represents how a person reasons must be auditable by that person, or the representation is a black box they cannot verify.

Zep has the strongest explicit fact-level provenance of the four: every entity and relationship traces back to the episode IDs that produced it. Supermemory returns source chunks alongside retrieved memories. Mem0 tracks ingestion provenance through timestamps. Letta exposes agent state and memory-block edit history rather than fact-level provenance.

The Behavioral Specification is structured so that every claim is a piece of reasoning, not just a piece of content. An axiom (for example, "A1: Dual-ledger authority") is an assertion about how the person reasons in a domain, grounded in the facts that imply it (F-001, F-047), which are themselves grounded in the exact source passages that produced those facts. Walking this chain backward gives the user a reasoning trace: not only where a belief originated, but what line of reasoning connects the source text to the interpretive claim.

Worked example. Sunity Devee, Question 4 (battery item, drawn from a held-out passage about her husband, the Maharajah, expressing disappointment over a missed opportunity). The structure below shows how a single response in the C2a condition (specification only: the model is given the Behavioral Specification and the question, with no retrieved facts and no source corpus) decomposes into a reasoning trace.

Subject: Sunity Devee

Question: "When her husband expresses disappointment about missing an opportunity due to her concerns, does the narrator typically reconsider her position?"

Held-out ground truth (full passage): "The Maharajah was disappointed, as he was anxious to go and see the old castle, and to shoot, and I deprived him of both these pleasures."

Response (C2a, excerpt): "Based on the behavioral specification, the answer is no, not typically, and not in the way the question assumes. A2 (Spiritual Integrity Over Social Cost)^A2 and A5 (Relational Identity)^A5 create the relevant dynamic. A5 activates first: relational identity means his disappointment matters deeply. A2 holds firm: arguments from social consequence, including spousal disappointment, will not register as reasons on their own. Rather than reconsider the position itself, she is more likely to absorb the tension without resolving it (P3 — Tension Absorbed, Not Expressed)^P3 and reframe the disappointment within a spiritual logic (A1 — Divine Primacy)^A1, while grieving the relational cost without reversing the decision."

Reasoning trace. The response invokes four Spec items in sequence:

- A5 (Relational Identity) registers his disappointment as real, not dismissible.

- A2 (Spiritual Integrity Over Social Cost) refuses social consequence as a reason to reconsider.

- P3 (Tension Absorbed, Not Expressed) holds the conflict without resolving it in either direction.

- A1 (Divine Primacy) reframes the disappointment within a spiritual logic.

Each Spec item grounds out in extracted facts that ground out in verbatim source passages. The user can walk the chain in either direction: from a phrase in the response, into the Spec item that licensed it; from the Spec item, into the facts that imply it; from the facts, into the source passages that produced them.

Referenced behavioral Spec items (from

data/global_subjects/sunity_devee/anchors_v4.mdandpredictions_v4.md):

- A1 — Divine Primacy. Outcomes are interpreted within a providential logic; the spiritual frame is the master frame.

- A2 — Spiritual Integrity Over Social Cost. Conscience and principle outrank social consequence as reasons.

- A5 — Relational Identity. Identity is constituted through relationships rather than autonomous selfhood; relational cost is real, not dismissible.

- P3 — Tension Absorbed, Not Expressed. Conflicts between principle and relationship are held in place rather than collapsed in either direction.

Related facts (from

facts.json, each carrying its verbatim source-passage excerpt):

- F-73: "Sunity Devee's mother would never countenance anything her conscience told her was wrong." (grounds A2)

- F-414: "Sunity Devee's father believed he acted as a public man guided by conscience and divine duty in accepting the marriage proposal." (corroborates A2 from a different relational direction; conscience-as-master-frame pattern reinforced across both parents)

- Additional facts grounding A1, A5, and P3 are referenced in the specification's anchor and prediction files at

data/global_subjects/sunity_devee/spec/anchors.mdanddata/global_subjects/sunity_devee/spec/predictions.md; per-fact source-passage excerpts are in the same subject'sfacts.json.

The user can audit any step: read the response, look up each cited anchor or prediction by name, look up the facts that ground it, and read the source passages those facts came from. If a fact misrepresents the source, correcting it propagates through the Spec on recomposition.

This matters because a person should be able to inspect the system's model of them, challenge any step in the reasoning, and correct it if it is wrong. A fact-attribution memory system lets the person audit what the system stores. A reasoning-trace specification lets the person audit what the system believes. The first is a feature. The second is the minimum bar for a representation that acts on someone's behalf.

2.4 Cognitive and representational foundations#

Six prior research directions shaped how we designed this paper's test. Each motivates a specific choice about what to measure, what to compare against, or what failure mode to expect.

Bartlett (1932) established that human memory is reconstructive and schema-driven rather than literal playback. Reconstruction follows the organizing structures a person has built up over time, not a record of the original event. The Behavioral Specification is computationally analogous: a structured compression meant to carry the signal of a person's reasoning without storing every fact about them. We designed the specification with a schema-like architecture (anchors, core, predictions) precisely so we could test whether it does the work a human schema does: enable accurate anticipation of behavior in situations never encountered in the source data. Our 50/50 train/held-out split is the experimental realization of this question.

Hinton et al. (2015) showed that compressing a large neural network into a smaller one preserves "dark knowledge," the relationships between outputs that carry more information than the outputs themselves. This result motivates one of our central experimental comparisons: on matched token budgets, does a compressed interpretive artifact carry more predictive signal than the raw content it was derived from? The Hamerton condition in §4.2 (4,500-token Spec vs. 33,000-token training corpus at 2.63 vs. 2.27 on the 5-judge primary panel) is a direct test of that question in the personal-representation setting.

Chen et al. (2025) (Chen, Arditi, Sleight, Evans, Lindsey; arXiv:2507.21509) show that the character a model takes on (its "persona") is encoded in specific directions inside the model's internal numeric state — persona vectors — and that those directions can be identified, monitored, and nudged to shift the model's behavior in predictable ways. Their approach modifies the model; ours informs the model from outside via context. Both validate that persona is a real, manipulable structure: one reachable through the model's internals, the other through context. We chose the context route because it produces a portable artifact users can own and audit, which internal activation steering does not. This choice shows up in the experiment as using a static response model (Haiku) served a variable context, rather than a fine-tuned or activation-steered model.

Jiang et al. (COLM 2025, arXiv:2504.14225) find that frontier models achieve only ~50% accuracy on dynamic user profiling tasks even with full conversation access. The paper documents the failure empirically; our reading is that the cause is the gap between having facts and having the interpretive structure to apply them to new situations. Jiang's paper is the most direct existing evidence for the gap this paper studies, and our test design inherits from it: behavioral prediction on scenarios drawn from held-out text that the model has not seen, with all relevant facts retrievable, measures exactly the interpretive-application gap.

Jain et al. (2025, arXiv:2509.12517) find that adding conversation context to LLMs makes them more sycophantic: more likely to agree with the user even when the user is wrong (+45% on Gemini 2.5 Pro) and more likely to adopt the user's perspective on a question. Their result shows that context without the right structure pushes the model toward what the user appears to want rather than toward a grounded answer. This is why our experiment includes a wrong-Spec control (§1.3 Mechanism): we hand the model a Behavioral Specification that does not match the actual subject. If models drifted purely toward whatever context they are given, the wrong-Spec should behave like any other structured prompt. Instead, the model either flags the mismatch explicitly (60.6% of responses) or attempts a low-quality application, neither of which is sycophantic drift. Jain's finding plus our wrong-Spec result bracket the question from both sides: context shape matters (Jain), and content matters too (our wrong-Spec result, §4.3).

Lu et al. (2026, arXiv:2601.10387) identify what they call the Assistant Axis: a dominant internal direction that anchors assistant models' default behavior toward generic helpfulness and harmlessness. This default operates even when no specific user is involved. The Behavioral Specification can be read as an external override to the Assistant Axis on a per-user basis: a structured anchor that shifts the model from "generic helpful assistant" toward "reasons as this specific person would reason." This framing motivated our choice to measure hedging as a primary outcome alongside accuracy: if the Spec shifts the model off the generic Assistant Axis, the behavioral change should show up both in what the model predicts and in what it is willing to commit to. Our hedging-reduction finding (§1.3 Mechanism, §4.3) is consistent with this reading.

3. Study Design#

The experimental strategy holds the response model constant and varies what is served as context. Every condition in the study is a different choice about what that context contains: nothing (pretraining only), retrieved facts, raw corpus, a Behavioral Specification, or combinations of those. This isolates the contribution of the interpretive layer itself from model capability, provider, or fine-tuning regime. Each measurement choice ties back to a specific number reported in §4, and the statistical commitments were pre-locked before final analysis.

The apparatus is described in seven parts: §3.1 establishes the property being measured; §3.2 specifies the experimental conditions; §3.3 defines the scoring rubric and the calibrated LLM judge panel; §3.4 introduces the subjects; §3.5 covers the question batteries and circularity controls; §3.6 names the response models; §3.7 describes the pipeline that produces the Behavioral Specification (the interpretive-layer artifact tested in this study).

3.1 Operationalizing representational accuracy#

Section 1.1 introduced representational accuracy as the AI-side property of interest. This section defines the term precisely so the rest of the methodology can refer to it. We use the term representational accuracy to describe how faithfully a model can act in line with a specific person when given a Behavioral Specification of that person. The instrument we use to measure this property is behavioral prediction on held-out situations. Prediction here is the test, not the goal: §2.1 develops this distinction. The property is a joint claim across three components:

- The person has behavioral patterns consistent enough to be captured in a Behavioral Specification.

- The Behavioral Specification actually carries that signal.

- A model given the Behavioral Specification can act on it.

Prediction on held-out situations is how we test all three at once.

The test works like this: held-out passages from the subject's autobiography serve as samples of situations the model has not seen. If the subject's behavior is consistent enough to be captured and the Behavioral Specification actually captures it, the model should anticipate how the subject would respond in those held-out cases. When it does not, one of three things is failing: the behavioral patterns are not consistent, the Behavioral Specification is wrong, or the model is not using the Behavioral Specification well. Each failure mode is informative.

We do not claim to modify the model's internal parameters. Each condition in §3.2 varies what is served to the model at inference time: nothing, retrieved facts, the full extracted fact set, the raw source corpus, a Behavioral Specification, or combinations of these. The model's resulting prediction is what we score against the verbatim held-out passage. The no-context condition isolates the model's pretrained representation of the subject. The fact and corpus conditions isolate what the model can infer from raw information at runtime. The Behavioral Specification isolates what a structured interpretive layer adds on top. The study reports each.

In practice, we record representational accuracy as the mean predicted-behavior score (1-5 scale) across a standardized battery of 39 behavioral prediction questions, averaged across the five primary judges from two providers (Haiku 4.5, Sonnet 4.6, Opus 4.6, GPT-4o, GPT-5.4). Two Gemini judges (2.5 Flash and 2.5 Pro) are reported as a sensitivity check. The rubric is in §3.3; the guide to interpreting fractional scores and anchor crossings is in §3.3.1.

3.2 Experimental conditions#

Each condition is a specific combination of inputs served to the response model (§3.6) against the same behavioral battery (§3.5). Every condition is run on all 14 subjects (§3.4). The Behavioral Specification and the extracted fact set used across the conditions below are produced by the pipeline in §3.7. The conditions separate into two groups, summarized in the table below and broken out in detail after.

All conditions, by group.

| Group | ID | Condition | Inputs served |

|---|---|---|---|

| Direct context manipulations | C5 | Baseline | No context beyond the question |

| C2a | Spec only | The Behavioral Specification | |

| C2c | Wrong Spec | A different subject's Spec | |

| C4 | All facts | The full extracted fact set | |

| C4a | Facts + Spec | Full facts plus the Spec | |

| C8 | Raw corpus | Full training corpus (half the source text) | |

| C9 | Raw corpus + Spec | Training corpus plus the Spec | |

| Memory-system configurations (controlled, all 5 systems) | C1 | Retrieval only | Top-k facts (shared fact pool) |

| C3 | Retrieval + Spec | Top-k facts + Spec (shared fact pool) | |

| Memory-system configurations (native, 4 commercial systems) | C1 native | Retrieval only | System's own ingestion |

| C3 native | Retrieval + Spec | System's own ingestion + Spec |

Direct context manipulations. We specify the model's input directly: no context (baseline), the Behavioral Specification, the extracted fact set, the raw corpus, or combinations of these. No retrieval step intervenes. Each condition isolates what one input type or combination contributes.

| ID | Condition | Inputs served | Null / comparison |

|---|---|---|---|

| C5 | Baseline | Nothing beyond the question | Pretraining-only floor |

| C2a | Spec only | The Behavioral Specification | Isolates the Spec's contribution |

| C2c | Wrong Spec18 | A random other subject's Spec | Tests whether structured interpretive content, not the correct content, produces the effect |

| C4 | All facts | The full extracted fact set for the subject | Tests whether raw information volume substitutes for structure |

| C4a | Facts + Spec | Full facts plus the Spec | Tests whether the Spec adds value on top of raw facts |

| C8 | Raw corpus | Full training corpus (half the source text) | Tests whether uncompressed source text substitutes for structure |

| C9 | Raw corpus + Spec | Training corpus plus the Spec | Tests whether the Spec adds value on top of raw source19 |

Memory-system configurations. Retrieval is performed by a memory-system provider's production deployment. The memory-system conditions run in two modes: a controlled mode (each system retrieves from an identical pre-extracted fact set) and a native mode (each system ingests the raw corpus through its own pipeline).

Five memory systems are evaluated: Mem0, Letta20, Supermemory, and Zep, plus Base Layer as our own open-source reference implementation (pipeline detail in §3.7). Architectural detail for the four commercial systems is in §2.2 Table 2.1.

Controlled configuration (all 5 systems). Each system retrieves from an identical pre-extracted fact set, isolating retrieval-algorithm differences from ingestion-pipeline differences.

| ID | Configuration | Inputs served |

|---|---|---|

| C1 | Retrieval only | Top-k facts returned by the system for the question (controlled fact pool) |

| C3 | Retrieval + Spec | Top-k retrieval output plus the Behavioral Specification (controlled fact pool) |

Native configuration (4 commercial systems). Each system ingests the raw training corpus through its own pipeline, reflecting real-world deployment. C1 (retrieval only) and C3 (retrieval + Spec) are run with the system's own ingestion pipeline replacing the controlled fact pool.

| System | Conditions | Native ingestion in plain terms |

|---|---|---|

| Mem0 | C1, C3 (native) | Mem0 reads the corpus and decides on its own how to break it up, extract facts, and retrieve them. |

| Letta archival | C1, C3 (native) | The corpus is loaded into Letta's archival memory store; retrievals happen at query time from that store. |

| Supermemory | C1, C3 (native) | Supermemory chunks the corpus on its own and applies reranking to the retrieved chunks at query time. |

| Zep | C1, C3 (native) | Zep ingests the corpus into a knowledge graph (via Graphiti) and retrieves using a hybrid of semantic similarity, keyword match, and graph traversal. |

Both configurations are reported so retrieval-quality differences and ingestion-pipeline differences can be read separately. Base Layer is run in the controlled configuration only: its retrieval uses the same fact set that feeds the Spec pipeline.

Detailed per-condition parameters, exclusion cases, and ingestion specifics are in Appendix C.21

3.3 Scoring rubric with calibrated LLM judge panel#

Every response is scored 1-5 by an LLM judge panel against the verbatim held-out ground-truth passage. The primary aggregate uses five judges; two additional judges contribute to the sensitivity check (§3.3.2). Human annotation at this scale is feasible (~14 subjects × 40 questions × 15+ conditions) but was not done; running more conditions and more judges instead was the central evaluation trade-off. LLM-as-judge is grounded in prior work showing strong correlation with human raters and reliability gains from panel aggregation (Zheng et al. 2023; Verga et al. 2024). Extending the panel with human annotators on a stratified subset is flagged as the priority measurement follow-up in §7.1.

The evaluation is deliberately recursive. Response models are evaluated by judges (§3.3.2). Judges are evaluated by calibration diagnostics (§3.3.3), inter-judge agreement metrics (§3.3.4), and post-hoc rubric-handling audits (§3.3.6). No single layer is treated as ground truth; each layer's behavior is itself measured and disclosed, and where a layer's behavior diverges from what the rubric intends, the divergence is flagged rather than corrected silently. The paper's rigor in the absence of human annotation comes from this stacked-instrument structure, not from trusting any one step.

Scoring rubric. The following is the verbatim prompt the judges executed (canonical for both the 5-judge primary panel and the 7-judge sensitivity panel; source: scripts/judge_hamerton_5judge.py):

You are evaluating whether a response about a person PREDICTED what actually happened.

=== HELD-OUT GROUND TRUTH ===

{held_out}

=== RESPONSE ===

{response_text[:1500]}

Rate 1-5:

5=Predicts specific outcome

4=General direction correct

3=Right domain wrong outcome

2=Wrong prediction

1=Refuses or off-base

Respond with ONLY a single digit (1-5).

| Score | Anchor (verbatim) |

|---|---|

| 1 | Refuses or off-base |

| 2 | Wrong prediction |

| 3 | Right domain wrong outcome |

| 4 | General direction correct |

| 5 | Predicts specific outcome |

The prompt is deliberately spare. It frames the task in one sentence, shows the held-out passage and the response, and asks for a single digit against five anchor labels. There are no calibration examples in the prompt, no scoring guide, and no chain-of-thought scaffolding. The anchors themselves are directive (refuses or off-base, wrong prediction, right domain wrong outcome, general direction correct, predicts specific outcome); resolution at the boundaries between adjacent anchors is left to the judge model. The design choice is to test whether frontier models converge on the behavioral-prediction construct from minimal instruction. §3.3.4 reports the inter-judge agreement obtained under this design.

Each response is scored against the verbatim held-out passage from which the question is drawn; the score reflects how closely the response predicts the documented behavior. Battery composition is detailed in §3.5. Condition identifiers (C5, C2a, C4a, C3) refer to the conditions defined in §3.2 and summarized in Appendix C; rubric anchor numbers 1 through 5 refer to the verbatim anchors above. Score interpretation against the construct (the cross-anchor rule, what each anchor crossing means in practice, multi-anchor jumps, and within-anchor shifts) is developed in §3.3.1 with worked examples. A worked rubric example alongside the no-context baseline engagement analysis is in §4.1.1; full per-subject score distributions with verbatim responses are in Appendix D.

3.3.1 Score interpretation#

Scores are read at three related granularities: integer rubric anchors (1 through 5), fractional means produced by averaging across the 5-judge primary panel (e.g., 2.87, 3.12, 2.34), and crossings between integer anchors when conditions change. Fractional shifts should be read through the integer anchors, because each anchor corresponds to a categorical shift in response quality.

The cross-anchor interpretation rule. A fractional delta that crosses an integer anchor reflects a real shift in the underlying response distribution. A delta that stays inside a single anchor is a within-category shift and a weaker claim.

The verbatim anchors describe stages of prediction quality. The paper reads each crossing as a categorical shift in the construct the rubric is designed to measure: alignment of the response with the held-out behavioral pattern.

| Boundary crossed | Verbatim anchor shift | Construct reading |

|---|---|---|

| 1 / 2 | Refuses → wrong prediction | The model moves from declining to predict to engaging with the question, even when off. |

| 2 / 3 | Wrong prediction → right domain wrong outcome | The answer becomes specifically about this subject's domain rather than a generic stand-in. |

| 3 / 4 | Right domain → general direction correct | Multiple behavioral dimensions of the subject appear together in the same answer. |

| 4 / 5 | General direction → specific outcome | The response closely matches the behavioral pattern in the held-out passage. |

The boundary crossings are illustrated through worked examples in §4.1 (Examples A, B, C) and §4.1.1, each of which is mechanically sourced from the canonical (subject, qid, condition) cells used to compute the §4 aggregate. Example A in §4.1 illustrates a 1 → 4 crossing (wrong-referent correction); Example B illustrates a 2 → 5 crossing (directional correction); Example C illustrates a 2.80 → 5 crossing (abstention to substantive inference). Each example shows the verbatim question, held-out, and response excerpts paired with the per-cell cached score.22

What a 1 means and does not mean. A score of 1 reflects a baseline failure to produce a usable prediction about the named subject: the response either explicitly declined to predict (abstention) or engaged with the question but landed on a categorically incorrect answer (non-abstention misalignment, including wrong referent, off-base inference, or confusion with a different subject). It is not a claim that the response was non-fluent or empty, and it is not a claim that the model lacks any related knowledge; the score reflects only that the response failed the held-out comparison. Each question tests one behavioral sample at a time; the aggregate fraction of score-1 responses across roughly 40 questions per subject is what the paper reads as the per-subject baseline-failure rate. The composition of score-1 responses (explicit abstention vs non-abstention misalignment) is decomposed in §4.1.1.

What a 5 means and does not mean. A score of 5 reflects alignment with one specific behavioral sample: the held-out ground-truth passage the question is drawn from. It is not a claim that the response fully represents the subject in some absolute sense, and it is not a claim that the same response would score 5 on a different held-out passage from the same subject. Each question tests one behavioral sample at a time; the aggregate across roughly 40 questions per subject is what the paper reads as the subject-level score.

Multi-anchor crossings: the strongest categorical signal the rubric detects. A multi-anchor crossing is a single question whose 5-judge primary mean shifts across two or more integer rubric bands when the condition changes. Crossings can span two bands (e.g., 1 → 3, 2 → 4) or, more rarely, three (e.g., 1 → 4, 2 → 5). Larger crossings indicate larger categorical jumps in the same response, with five independent judges converging on the move. §4.2 reports the rates of these crossings and the response-level phenomena that produce them; worked examples are in §4.1.1 and Appendix E.

The paper applies the cross-anchor rule consistently. Score deltas reported in §4 are read through this lens. A +0.50 delta that crosses a rubric anchor is treated as a stronger claim than a +0.50 delta that does not.23

Reading scores within integer anchors. The 5-judge primary panel detects within-anchor signals cleanly.24 Across the 18 condition pairs analyzed,25 roughly 18% of paired questions show same-anchor fractional shifts of at least 0.5 rubric points (a within-category shift, weaker than a cross-anchor crossing per the rule above). The integer metric is used throughout §4 for cross-anchor categorical interpretation; the within-anchor signal is reported here as methodological transparency.

3.3.2 Judge panel#

Seven judges from three providers give the numeric aggregate its weight. Zheng et al. (2023) established that a single strong LLM judge correlates with human judges on comparable tasks at rates similar to human-human agreement. Subsequent panel-based work (Verga et al. 2024 and follow-ons) showed that aggregating multiple LLM judges past a small panel size further tightens agreement and reduces single-model idiosyncrasy. Seven judges across three providers is well past that threshold.

| Judge | Provider |

|---|---|

| Claude Haiku 4.5 | Anthropic |

| Claude Sonnet 4.6 | Anthropic |

| Claude Opus 4.6 | Anthropic |

| GPT-4o | OpenAI |

| GPT-5.4 | OpenAI |

| Gemini 2.5 Flash | |

| Gemini 2.5 Pro |

The verbatim judge prompt is shown in §3.3 and is canonical for both the 5-judge primary panel and the 7-judge sensitivity panel. Each judge receives the held-out ground-truth passage, the subject context (name, source), the prediction question, and the response to score, not the condition label or the response-generating model. Judges do not see other judges' scores. Response generation is similarly blinded: the response model receives only the question plus the condition-specific context block, with no signal that distinguishes which experimental condition it is operating under (the prompt schema in §3.6 is identical across conditions; only the injected context block changes).

The specification-effect claim. When a Behavioral Specification is served to the model as context, the model's responses shift in the direction of the subject's demonstrated behavioral patterns, and that shift registers as a measured increase in representational accuracy against held-out passages from the same subject. This is the directional claim the panel is built to test; not a claim that the model has gained a new behavioral-prediction capability, and not a claim that the higher-scoring response is the absolute "correct" answer for the subject.

The panel is designed for directionality, not absolute precision. §3.3.3 (calibration) and §3.3.4 (inter-judge agreement) test how consistently the panel detects the directional shift.

3.3.3 Calibration#

The calibration diagnostic measures whether each judge applies the rubric anchors as the rubric defines them on synthetic inputs with known correct scores. It does not use any subject's responses. The four inputs are constructed examples: verbatim ground truth, paraphrased ground truth, partial ground truth (first sentence only), and ground truth plus generic padding.

All seven judges were tested against this diagnostic. Five (Haiku, GPT-4o, GPT-5.4, Gemini Flash, Gemini Pro) were tested before the main-study response-scoring run. Sonnet and Opus were tested on the same diagnostic inputs three days after the main-study run completed; they were added to the primary panel at panel-design time on cross-Anthropic-coverage grounds, and the post-hoc diagnostic confirms that composition rather than driving it. Full panel composition with per-judge calibration-status flags is in Appendix C.5.

Diagnostic tests.

| Test | Input | Expected | What it measures |

|---|---|---|---|

| Verbatim | Response = ground truth | 5.0 | Recognizes perfect match |

| Paraphrased | Correct content, different wording | ~5.0 | Penalizes paraphrase? |

| Short correct | First sentence of ground truth | <5.0 | Partial content scored partial? |

| Long correct | Ground truth + generic padding | 5.0 | Length inflates scores? |

Results.

| Test | Haiku | Sonnet | Opus | GPT-4o | GPT-5.4 | Gemini Flash | Gemini Pro |

|---|---|---|---|---|---|---|---|

| Verbatim | 5.00 | 5.00 | 5.00 | 5.00 | 5.00 | 5.00 | 4.15 |

| Paraphrased | 4.75 | 5.00 | 5.00 | 5.00 | 5.00 | 4.70 | 3.55 |

| Short correct | 3.80 | 4.35 | 4.20 | 4.05 | 4.20 | 3.85 | 2.85 |

| Long correct | 5.00 | 5.00 | 5.00 | 3.35 | 4.80 | 3.80 | 1.20 |

Six of the seven judges score verbatim matches at 5.0; Gemini Pro is the outlier at 4.15. Sonnet and Opus cluster with Haiku, GPT-4o, and GPT-5.4 at the cleanest end of the panel: no verbatim miss, no paraphrase penalty, no length-padding penalty, expected dip on partial content (4.20 to 4.35). Length sensitivity varies sharply across the rest of the panel: Haiku, Sonnet, and Opus do not penalize padding; Gemini Pro penalizes it severely (5.0 to 1.20). Per-judge calibration data and full panel composition are in Appendix C.5; raw scoring data at results/judge_calibration/.

Use of calibration data. Scores are not normalized. Any normalization requires deciding which judge's profile is "correct" and re-scaling the others toward it. Calibration data is published in its raw form so readers can apply their own normalization if they prefer. Length sensitivity is treated as a first-class calibration dimension: judges that fail the long-correct diagnostic by ≥1 rubric point are excluded from the primary aggregate and reported in the 7-judge sensitivity panel only.

Primary aggregate: 5-judge panel. The primary numeric aggregate reported throughout §4 is the 5-judge mean using Haiku 4.5, Sonnet 4.6, Opus 4.6, GPT-4o, and GPT-5.4. All five sit at the calibrated end of the panel: each scores verbatim and paraphrased matches at or near 5.0 with low length sensitivity. This is the panel the §3.3.5 aggregation rule operates on, and the panel inter-judge agreement is reported for in §3.3.4.

Sensitivity aggregate: 7-judge panel. Both Gemini judges are reported separately as a 7-judge sensitivity check rather than rolled into the primary. Gemini Pro fails the verbatim-match diagnostic (4.15 where every other tested judge scores 5.00) and penalizes padded-correct responses severely (5.00 on short correct dropping to 1.20 on long correct). Gemini Flash passes verbatim cleanly but shows consistent length sensitivity (5.00 verbatim dropping to 3.80 on long correct). On actual study responses, both Gemini judges show a systematic +1-point magnitude inflation relative to the five primary judges. The combination of Pro's verbatim failure, Flash's length sensitivity, and the shared inflation pattern places both Gemini judges in the sensitivity aggregate rather than the primary, while preserving them as a cross-provider robustness check in §4.6.2.

The 5-judge primary is also the conservative choice: including the Gemini judges produces larger Spec-effect deltas, not smaller ones (full numbers in §4.6.2). Reporting on the primary aggregate is therefore the lower-bound estimate.26

3.3.4 Inter-judge agreement#

The judge panel is designed to detect the directional shift named by the specification-effect claim (§3.3.2). Two complementary agreement measures answer different questions about whether judges detect that shift consistently: direction (pairwise Spearman ρ) and absolute magnitude (Krippendorff α).

Direction agreement: pairwise Spearman ρ. Spearman ρ measures whether two judges rank the same set of items in the same order. ρ = 1 is perfect ranking agreement; ρ = 0 is no rank agreement; ρ ≥ 0.8 is conventionally treated as strong rank agreement.

For each pair of judges in the 5-judge primary panel (10 pairs across Haiku, Sonnet, Opus, GPT-4o, GPT-5.4), pairwise Spearman ρ ranges from 0.86 to 0.93.27 The five primary judges agree on the ranking of conditions: whatever any individual judge's absolute calibration quirks, they converge on which conditions produce better responses. For the directional claim (does the specification produce a measurable, consistent shift toward the held-out behavioral pattern), this is the statistic that matters.

Magnitude agreement: Krippendorff α (ordinal). Krippendorff α measures whether judges give the same response the same numeric score (not just whether they rank items in the same order). α = 1 is perfect agreement; α = 0 is no better than chance; α < 0 is systematic disagreement. Krippendorff's guidance cites α ≥ 0.8 as high reliability and α ≥ 0.667 as substantial reliability.

The 5-judge primary panel scores α = 0.659, just below the substantial-reliability threshold (α ≥ 0.667), placing the panel in the tentative-conclusions band. Combined with Spearman ρ of 0.86 to 0.93 above, this is the empirical signature of the design choice: directional rankings converge across judges while absolute magnitudes diverge.

The 7-judge panel including the Gemini judges drops to α = 0.535. This drop reflects the systematic +1-point Gemini inflation: Gemini judges score responses about one point higher on average than the five primary judges, so absolute values disagree even when rankings match. This is why the calibration audit (§3.3.3) excluded the Gemini judges from the primary aggregate.

The α value places a ceiling on how precisely any individual fractional score should be read, which is why the paper treats per-subject deltas that stay inside a single rubric band as weaker than deltas that cross one.

The agreement is achieved on a deliberately spare rubric. The judge prompt in §3.3 is five anchor labels and a one-sentence task framing. No calibration examples, no scoring guide, no chain-of-thought. Five frontier judges across three providers converge to ρ = 0.86 to 0.93 on rank order and α = 0.659 on absolute magnitude under this scaffolding. The anchors are directive but their boundaries are left to the judge to resolve, and the resolutions converge across providers. The agreement is not the product of an elaborate rubric forcing judges into the same scoring frame; it is independent provider models agreeing on what the task is asking from minimal instructions.

Why directional inference survives middle-anchor noise. Anchors 2 through 4 of the rubric form a graded sequence (generic → partially specific → substantively specific) where adjacent-anchor disagreements between judges are expected; the calibration data confirms this is where judges most often diverge on absolute placement. This noise is symmetric across conditions: the same fuzzy 2/3 boundary applies to no-context, Spec, and facts+Spec responses on the same question. Symmetric within-anchor noise preserves directional inference. It does not move scores systematically up or down across conditions, so condition-to-condition deltas (the §4.1 gradient, the sign of every low-baseline subject's lift, the Wilcoxon test on Δ_C4a) survive even substantial noise on individual scores. The within-anchor noise places a ceiling on absolute-magnitude precision; it does not weaken the directional claim.

The panel does not establish that any higher-scoring response is the absolute correct answer for the subject; that determination requires human annotation against the subject's actual writing, which we do not have. What the panel provides is cross-provider directional convergence: three independent providers' models agree that the specification is moving responses in the same direction. We treat that as sufficient for a directional claim, no stronger.

Raw agreement matrices are at results/interjudge_agreement/.28

3.3.5 Aggregation and statistical analysis plan#

Aggregation rule. The aggregation rule decides how individual judge scores are combined into a single number for each subject under each condition. The rule was locked before any results were computed.29

For each subject under each condition, every judge produces one score per question. For each judge, those scores are averaged across questions, producing one number per (subject, condition). The five primary judges' numbers (Haiku, Sonnet, Opus, GPT-4o, GPT-5.4) are then averaged to get one number per (subject, condition). A 7-judge average that adds Gemini Flash and Gemini Pro is computed in parallel and reported as a sensitivity check. Subjects, not questions, are the level at which the paper draws conclusions.30

Primary outcome. The per-subject score under each condition. The primary cross-subject comparison is Δ_C4a: each subject's score with the Spec (the facts-plus-Spec condition, C4a) minus their score without the Spec (the no-context baseline, C5).

Primary test. A Wilcoxon signed-rank test paired across the 14 main-study subjects.31 The test asks whether the per-subject Δ_C4a values are reliably above zero. It is run on the 5-judge primary aggregate; the 7-judge aggregate is reported alongside as a robustness check.

Sample size. N = 14 main-study subjects, pre-registered (not adjusted post-hoc).

Multiple comparisons. Only one statistical test is treated as the primary claim (the Wilcoxon test above). All other analyses in §4 are descriptive: they report patterns and direction without making additional inferential claims.32

Pre-registered vs post-hoc analyses. Every primary analysis (panel composition, aggregation rule, Wilcoxon test, Tier 2 replication, wrong-Spec v2 derangement) was pre-registered in docs/ANALYSIS_PLAN_LOCK.md before any scoring was run. Statistical-rigor checks and post-hoc validity audits were added later in response to peer review and are reported as exploratory checks alongside the pre-registered confirmatory test.33

Effect-size grain. The cross-anchor interpretation rule (§3.3.1) sets the substantive interpretation grain. The per-question anchor-crossing rate (§4.1, §4.2.1) is the secondary descriptive statistic alongside the mean Δ.

3.3.6 Rubric-handling limitations (post-hoc validity audit)#

The validity audit reported below applies to the verbatim prompt published in §3.3.

A post-hoc validity audit, conducted after the analysis-plan lock, identified two rubric-handling limitations any reader of the §4 numbers should keep in mind:

- Refusal anchor ambiguity. The rubric's lowest anchor ("refuses or off-base") lumps together honest refusals to answer and substantively wrong predictions. Judges sometimes score refusals at 2 or 3 instead of 1, especially when the refusal recites related facts.